CISC 6000: Deep Learning Generative models


1. (20 points) Show that KL-divergence is

(a) always non-negative.

(b) not symmetric.
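
Before writing the proofs, a quick numerical check on a small discrete example can help build intuition. The sketch below is not a proof. The function name `kl_divergence` and the two distributions are illustrative choices, and the code assumes q is strictly positive wherever p is.

```python
import math

def kl_divergence(p, q):
    """Discrete KL(p || q) in nats; assumes q[i] > 0 wherever p[i] > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Two illustrative distributions over the same two outcomes.
p = [0.5, 0.5]
q = [0.9, 0.1]

kl_pq = kl_divergence(p, q)  # KL(p || q)
kl_qp = kl_divergence(q, p)  # KL(q || p)

print(f"KL(p||q) = {kl_pq:.4f}")
print(f"KL(q||p) = {kl_qp:.4f}")
print(f"KL(p||p) = {kl_divergence(p, p):.4f}")
```

Both values come out greater than zero and differ from each other, while the divergence of a distribution from itself is zero.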

2. (40 points) Variational Autoencoder in Image Generation

In this exercise, you will implement a generative model (VAE) to reconstruct and generate facial images. The dataset is a subset of the CelebA dataset, which consists of celebrity faces. The data are provided as follows:

• Training: under the celebra\train directory.

• Test: under the celebra\test directory.

We strongly recommend using a GPU for training. Training your model takes about 40 minutes on a GPU.

Deliverables

(a) Design, implement and train your VAE with the following constraints:

• Downsize the training and test images to 128 × 128 (for computational efficiency)

• Total number of weights between 500,000 and 800,000

• Activation function can only be ReLU, LeakyReLU, Sigmoid or Tanh

• At least 3 convolutional layers.

• Batch size greater than 250

• Use Xavier or He initialization

• Reconstruction loss should be L2

• Latent vector size = 128

i. Present your model’s summary.

ii. Plot the first ten test images, their corresponding latent vectors, and the reconstructed images produced by your trained model.

iii. Plot ten images generated by randomly sampling latent vectors.
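
The weight budget in (a) can be checked on paper before building the model. The sketch below counts parameters for one hypothetical layout; it is not a required or recommended architecture. It assumes RGB input, 3 × 3 kernels, five stride-2 convolutions (128 → 4 spatially), biases on every layer, and no batch normalization. The helper names `conv2d_params` and `dense_params` are illustrative.

```python
def conv2d_params(in_ch, out_ch, kernel=3, bias=True):
    """Weights in a Conv2D or Conv2DTranspose layer with a square kernel."""
    return kernel * kernel * in_ch * out_ch + (out_ch if bias else 0)

def dense_params(in_features, out_features, bias=True):
    """Weights in a fully connected layer."""
    return in_features * out_features + (out_features if bias else 0)

LATENT_DIM = 128
FLAT = 4 * 4 * 64  # feature map after five stride-2 convolutions of a 128x128 input

# Hypothetical encoder/decoder channel plans (input channels -> output channels).
encoder_convs = [(3, 16), (16, 32), (32, 64), (64, 64), (64, 64)]
decoder_convs = [(64, 64), (64, 64), (64, 32), (32, 16), (16, 3)]

total = sum(conv2d_params(i, o) for i, o in encoder_convs)
total += 2 * dense_params(FLAT, LATENT_DIM)  # separate heads for mean and log-variance
total += dense_params(LATENT_DIM, FLAT)      # decoder input projection
total += sum(conv2d_params(i, o) for i, o in decoder_convs)

print(f"total weights: {total:,}")
print("within budget:", 500_000 <= total <= 800_000)
```

Most of the budget in this layout sits in the dense layers around the latent vector, so the size of the last feature map is the main lever for moving the total up or down. The count reported by your framework's model summary remains the authoritative number.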

(b) Modify and train your VAE using L1 as the reconstruction loss. Keep other parameters the same.

i. Re-plot the first ten test images, their corresponding latent vectors, and the reconstructed images produced by your trained model.

ii. Plot ten images generated by randomly sampling latent vectors.
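
The only change between parts (a) and (b) is the reconstruction term of the loss. The NumPy sketch below shows the two variants next to the usual KL term for a diagonal Gaussian posterior against a standard normal prior. It assumes the encoder outputs a mean and a log-variance, and it sums over pixels and averages over the batch, which is one common convention rather than a requirement. The function names are illustrative, and a real training loop would use the framework's tensor operations instead.

```python
import numpy as np

def reconstruction_loss(x, x_hat, kind="l2"):
    """Per-image summed error, averaged over the batch."""
    diff = (x - x_hat).reshape(len(x), -1)
    if kind == "l2":
        per_image = np.sum(diff ** 2, axis=1)
    elif kind == "l1":
        per_image = np.sum(np.abs(diff), axis=1)
    else:
        raise ValueError(f"unknown reconstruction loss: {kind}")
    return float(per_image.mean())

def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), averaged over the batch."""
    per_image = -0.5 * np.sum(1.0 + log_var - mu ** 2 - np.exp(log_var), axis=1)
    return float(per_image.mean())

# Tiny illustrative batch: two 2x2 single-channel "images".
x = np.zeros((2, 2, 2, 1))
x_hat = np.full((2, 2, 2, 1), 0.5)
mu = np.ones((2, 3))
log_var = np.zeros((2, 3))

loss_l2 = reconstruction_loss(x, x_hat, "l2") + kl_to_standard_normal(mu, log_var)
loss_l1 = reconstruction_loss(x, x_hat, "l1") + kl_to_standard_normal(mu, log_var)
```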

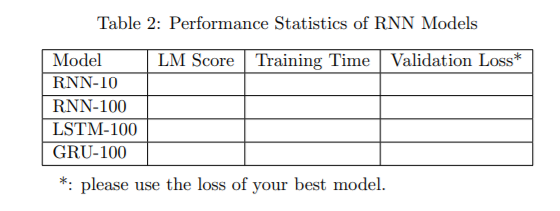

(c) As we discussed in class, FID measures the quality of your VAE model. The appendix at the end of this assignment provides a getFID function to help you calculate this quantity.

i. Obtain a set of reconstructed images from the test images. Calculate the FID using the test images and the reconstructed images. Fill in column #1 in Table 1. (Note: the scores should be less than 100).

ii. Randomly sample latent vectors and generate 1500 new images. Calculate the FID using the test images and the generated images. Fill in column #2 in Table 1. (Note: the scores should be less than 170).
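
For step ii, the latent vectors can be drawn from the standard normal prior. The sketch below is a minimal version, assuming the latent size of 128 from part (a). The `decoder` and `test_images` names in the comments are hypothetical placeholders for your own trained decoder and data.

```python
import numpy as np

LATENT_DIM = 128  # latent vector size from part (a)
N_SAMPLES = 1500  # number of images to generate

def sample_latents(n_samples=N_SAMPLES, latent_dim=LATENT_DIM, seed=0):
    """Draw latent vectors from the standard normal prior N(0, I)."""
    rng = np.random.default_rng(seed)
    return rng.standard_normal((n_samples, latent_dim)).astype(np.float32)

z = sample_latents()

# Hypothetical follow-up, assuming a trained `decoder` model and a
# `test_images` array (neither is defined in this sketch):
# generated_images = decoder.predict(z)
# score = getFID(test_images, generated_images)
```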

3. (40 points) Recurrent Neural Network

In this exercise, you will train a character-based language model using the novel Anna Karenina by Leo Tolstoy.

Build your own vocabulary set by extracting all distinct characters from the novel. Make sure you keep all lowercase and uppercase characters, punctuation, and special characters (e.g., newline). Sort your vocabulary in alphabetical order.
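
The vocabulary step can be sketched as follows. This version reads "alphabetical order" as Python's default string ordering (Unicode code point order), which is an assumption. The function name `build_vocab` and the short sample string are illustrative; in practice `text` would be the full novel loaded from your data file.

```python
def build_vocab(text):
    """Return the sorted distinct characters plus lookup tables in both directions."""
    chars = sorted(set(text))  # keeps case, punctuation, and newlines
    char_to_idx = {ch: i for i, ch in enumerate(chars)}
    idx_to_char = dict(enumerate(chars))
    return chars, char_to_idx, idx_to_char

# Illustrative sample; replace with the full text of the novel.
sample = "Ab a\n"
chars, char_to_idx, idx_to_char = build_vocab(sample)
encoded = [char_to_idx[ch] for ch in sample]
```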

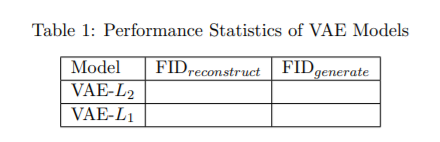

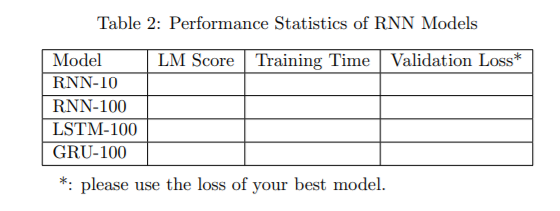

As we discussed in class, the Language Modeling (LM) score measures the quality of your language model. The appendix at the end of this assignment provides a lmscore function to help you calculate this quantity.

(a) Design, implement and train your RNN with the following constraints:

• Use one-hot encoding for the input characters

• One hidden layer of size 512 and one dense layer

• Sequence length = 10

• Batch size = 512
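
The one-hot encoding and sequence length constraints translate into a data-preparation step like the sketch below. It assumes the text has already been mapped to integer indices and that each window of `seq_len` characters is used to predict the single character that follows it, which is one common setup and not the only valid one. The function name and the `step` parameter are illustrative.

```python
import numpy as np

def make_training_pairs(encoded, seq_len, vocab_size, step=1):
    """Slice an index sequence into one-hot windows and one-hot next-char targets."""
    starts = range(0, len(encoded) - seq_len, step)
    x = np.zeros((len(starts), seq_len, vocab_size), dtype=np.float32)
    y = np.zeros((len(starts), vocab_size), dtype=np.float32)
    positions = np.arange(seq_len)
    for row, start in enumerate(starts):
        # Set one entry per time step to 1.0 for the input window.
        x[row, positions, encoded[start:start + seq_len]] = 1.0
        # The target is the character right after the window.
        y[row, encoded[start + seq_len]] = 1.0
    return x, y

# Tiny illustrative example: 5 encoded characters, vocabulary of size 3.
x, y = make_training_pairs([0, 1, 2, 1, 0], seq_len=2, vocab_size=3)
```

For the full novel, the dense one-hot array can become very large, so a `step` greater than 1 or a batch generator may be needed to stay within memory.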

Deliverables:

i. Present your model summary.

ii. Plot your model diagram using the plot_model function in Keras.

iii. Generate your own novel by giving a start token of your own choice. Limit your generated text to less than 500 characters.

iv. Fill in row #1 in Table 2.
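
Text generation in deliverable iii is typically a loop that repeatedly samples the next character from the model's predicted distribution. The sketch below shows only that loop. `next_char_probs` is a hypothetical callback standing in for your trained model (it takes the text so far and returns one probability per vocabulary entry), and `uniform_model` is a placeholder used here only so the example runs on its own.

```python
import random

def generate_text(next_char_probs, vocab, start, max_chars=499, seed=0):
    """Extend `start` one sampled character at a time, staying under 500 characters."""
    rng = random.Random(seed)
    text = start
    while len(text) < max_chars:
        probs = next_char_probs(text)  # one probability per vocab entry
        text += rng.choices(vocab, weights=probs, k=1)[0]
    return text

# Placeholder model: ignores the context and predicts a uniform distribution.
vocab = ["\n", " ", "a", "b"]

def uniform_model(text_so_far):
    return [1.0 / len(vocab)] * len(vocab)

novel = generate_text(uniform_model, vocab, start="a")
```

With a real model, the callback would one-hot encode the last `seq_len` characters of the text and return the softmax output of the dense layer.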

(b) Increase the training sequence length to 100 and keep everything else the same. Retrain your model. Deliverables:

i. Generate your novel by giving a start token of your own choice. Limit your generated text to less than 500 characters.

ii. Train the same model using LSTM and GRU and re-generate your novel using the same token as in (i).

iii. Fill in rows #2 to #4 in Table 2.

Appendix

You can use the following functions to get the FID and LM scores.

In order to use these functions, you must turn on the Internet option in Kaggle.

Calculate FID

from utils import getFID

getFID(real_images, generated_images) # Input should be two numpy arrays.

Calculate LM score

from utils import lmscore

lmscore(text) # Input should be a string.
